High Load Architecture Explained: Scalable Web Application Design Guide

High load architecture is often misunderstood as something only large-scale platforms need. In reality, it becomes relevant much earlier - at the point where growth starts exposing the limits of your system. Performance degradation, unstable behavior under concurrent users, and rising infrastructure costs are not random issues. They are signals that architecture is no longer aligned with business demand.

For businesses, high load architecture means designing a system that can handle increasing traffic, evolving data structures, and unpredictable usage patterns without constant rework. It is not about preparing for millions of users from day one, but about making decisions that allow your system to scale without breaking or becoming unmanageable.

This is where most teams struggle. Some delay architectural changes until it is too late, leading to outages and expensive refactoring. Others overengineer too early, introducing distributed systems, microservices, and unnecessary complexity before the product is validated.

In this article, we break down how high load architecture actually works in practice. You will see how to approach system design without overengineering, when to introduce distributed patterns, and how to build a scalable web application that can handle real-world growth.

Why High Load Architecture Is a Business Decision, Not Just a Technical One

When people hear “high load architecture”, they often imagine something deeply technical - distributed systems, Kubernetes clusters, microservices, and complex infrastructure diagrams.

But for businesses, high load architecture means something much simpler and more critical: your product continues to work when growth actually happens.

It is not about handling millions of users from day one. It is about ensuring that when your product starts gaining traction, you do not lose users due to slow performance, crashes, or inconsistent behavior.

A scalable web application is not built accidentally. It is the result of deliberate architectural decisions made early - or consciously postponed until they are truly needed.

Monolith vs Microservices: Choosing Without Overengineering

One of the most misunderstood topics in system design is the choice between monolithic and microservices architecture.

Why Most Products Should Start as a Monolith

At early stages, speed matters more than scalability.

A well-structured monolith allows teams to:

move faster

reduce development complexity

avoid unnecessary infrastructure costs

validate product-market fit without technical overhead

For businesses, this means lower initial investment and faster iteration cycles.

The mistake many startups make is assuming they need a high load system architecture from day one. In reality, most products do not have enough traffic or concurrent users to justify that complexity.

When Microservices Actually Make Sense

Microservices become relevant when your system starts to show real scaling pressure:

different parts of the system need to scale independently

teams grow and need separation of ownership

performance bottlenecks appear in specific services

deployment cycles slow down due to system size

At this point, scaling a web application requires breaking down the system into smaller, independent services.

However, microservices introduce new complexity:

network failures between services

data consistency challenges

increased DevOps overhead

This is why the transition should be driven by real needs, not trends.

Stateless vs Stateful Systems: The Hidden Scalability Factor

Another critical architectural decision is how your system handles state.

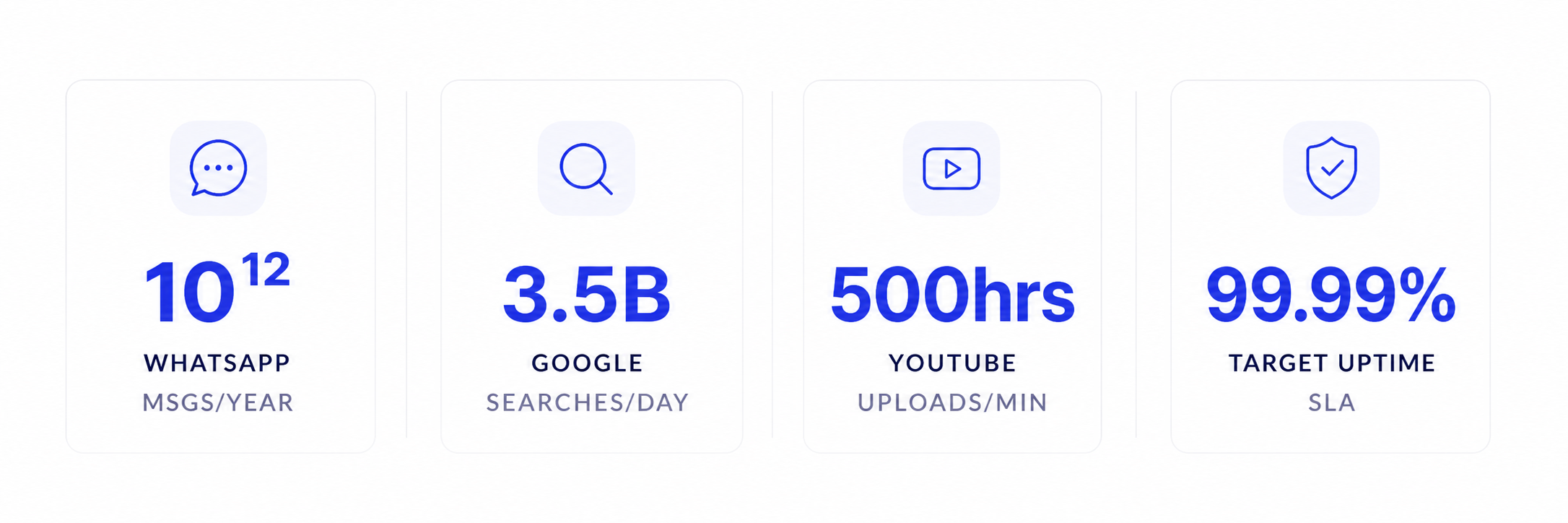

Why Stateless Systems Scale Better

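A stateless service does not keep user session data in the memory of an individual application server. Each request carries, or points to, everything needed to process it, so any instance can handle any request.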

This allows:

easy horizontal scaling

better load distribution

simpler infrastructure

improved fault tolerance

For example, if you need to handle high traffic, stateless services can be replicated across multiple servers quickly, because no replica holds session data that the others lack.
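
One common way to keep application servers stateless is to put the session data into a signed token that the client sends with every request. The sketch below illustrates that idea using only the Python standard library. The names `SECRET_KEY`, `issue_token`, `read_token`, and `handle_request` are hypothetical, and the token is signed but not encrypted, so it should not carry secrets.

```python
import base64
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical shared secret; every replica would load the same value from configuration.
SECRET_KEY = b"example-shared-secret"


def issue_token(session: dict) -> str:
    """Serialize the session data and append an HMAC-SHA256 signature."""
    payload = base64.urlsafe_b64encode(json.dumps(session, sort_keys=True).encode())
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return f"{payload.decode()}.{signature}"


def read_token(token: str) -> Optional[dict]:
    """Return the session data if the signature is valid, otherwise None."""
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return None
    return json.loads(base64.urlsafe_b64decode(payload))


def handle_request(server_name: str, token: str) -> str:
    """Any replica can serve the request; nothing is read from local memory."""
    session = read_token(token)
    if session is None:
        return f"{server_name}: 401 invalid session"
    return f"{server_name}: 200 hello {session['user']}"


token = issue_token({"user": "ana"})
print(handle_request("server-a", token))
print(handle_request("server-b", token))
```

Because both calls rely only on the token and the shared key, a load balancer is free to send consecutive requests from the same user to different servers.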

Where Stateful Systems Are Still Needed

Stateful components are still essential in many cases:

databases

real-time systems

transactional processes

The key is to isolate stateful parts and keep the rest of the system stateless.

For businesses, this directly impacts system scalability and operational cost.

Core Metrics Behind Scalable Systems

Before diving deeper into fault tolerance and distributed system design, it is important to understand how scalable systems are actually evaluated in real-world conditions.

For businesses, high load is not just about traffic. It is about how the system behaves under pressure - how fast it responds, how reliably it works, and how well it handles failures.

These core metrics define whether your architecture can truly handle growth or not.

| Metric | Description |
|---|---|
| Availability | The percentage of time a system is operational. Often expressed as "nines": 99.9% means ~8.76 hours of downtime per year; 99.999% ("five nines") means ~5 minutes. |
| Reliability | The probability that a system performs its intended function correctly over a period of time. Distinct from availability - a system can be up but returning wrong results. |
| Latency | The time it takes for a single request to complete, usually measured in milliseconds at various percentiles (p50, p95, p99). |
| Throughput | The number of requests or operations a system can handle per unit of time (requests per second, writes per second). |
| Consistency | Whether all nodes in a distributed system see the same data at the same time. Strong consistency guarantees this; eventual consistency allows temporary divergence. |
| Fault Tolerance | The ability of a system to continue operating - possibly in a degraded state - when one or more components fail. |
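
Two of these metrics are easy to compute by hand. The sketch below converts an availability percentage into yearly downtime and reads latency percentiles from a list of samples using the nearest-rank method. The function names and the sample values are made up for illustration, and monitoring tools may use a different percentile definition.

```python
import math
from typing import List

HOURS_PER_YEAR = 365 * 24  # 8,760 hours; leap years are ignored


def downtime_hours_per_year(availability_percent: float) -> float:
    """Yearly downtime implied by an availability figure such as 99.9."""
    return HOURS_PER_YEAR * (1 - availability_percent / 100)


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


# Hypothetical request latencies in milliseconds, with one slow outlier.
latencies_ms = [12, 15, 11, 14, 250, 13, 16, 12, 14, 13]

print(f"99.9%   -> {downtime_hours_per_year(99.9):.2f} hours of downtime per year")
print(f"99.999% -> {downtime_hours_per_year(99.999) * 60:.2f} minutes of downtime per year")
print(f"p50 = {percentile(latencies_ms, 50)} ms, p95 = {percentile(latencies_ms, 95)} ms")
```

In this sample the median stays low while p95 is dominated by the single slow request, which is why latency is reported at several percentiles rather than as one average.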

Avoiding Single Point of Failure: The Core Principle of High Load Systems

A system fails not when something breaks - but when one thing breaking takes everything down.

A component whose failure has that effect is called a single point of failure.

What Causes It

one database instance

one server handling all requests

tightly coupled services

lack of redundancy

Even a simple scalable web application can fail under load if it relies on a single critical component.

How to Design Around It

High load architecture always includes:

redundancy

load balancing

failover mechanisms

distributed components

The goal is simple: no single failure should stop the system.
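
A minimal sketch of redundancy with failover: the caller tries each replica in turn and only gives up when all of them fail. The replicas here are plain functions standing in for real servers, and `call_with_failover`, `AllReplicasFailed`, `healthy_replica`, and `broken_replica` are hypothetical names used only for illustration.

```python
from typing import Callable, List


class AllReplicasFailed(Exception):
    """Raised only when every redundant replica has failed."""


def call_with_failover(replicas: List[Callable[[str], str]], request: str) -> str:
    """Try each replica in order and return the first successful response."""
    errors = []
    for replica in replicas:
        try:
            return replica(request)
        except ConnectionError as exc:
            errors.append(exc)  # remember the failure and move on to the next replica
    raise AllReplicasFailed(f"{len(errors)} replicas failed")


def healthy_replica(request: str) -> str:
    return f"ok: {request}"


def broken_replica(request: str) -> str:
    raise ConnectionError("replica unreachable")


# One replica is down, but the request still succeeds.
print(call_with_failover([broken_replica, healthy_replica], "GET /orders"))
```

With a single replica in the list, one failure stops the request. With two or more, the system keeps working as long as at least one of them responds.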

This is one of the most important differences between a regular application and a system designed to handle high traffic.

Event-Driven and Distributed Systems: Handling Growth Without Breaking

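As traffic grows, purely synchronous request chains become a bottleneck.

When every action has to wait for an immediate response from every downstream component, one slow dependency slows down everything that calls it, and the system becomes fragile under load.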

Why Event-Driven Architecture Matters

Event-driven systems decouple components.

Instead of direct communication:

one service emits an event

another service processes it asynchronously

This allows:

better scalability

smoother handling of traffic spikes

reduced system dependencies

For example, sending emails, processing payments, or generating reports should not block user actions.
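
The emit-and-process flow above can be sketched with an in-process queue and a background worker. This is only an illustration under simplifying assumptions: a production system would typically use a message broker rather than `queue.Queue`, and `place_order`, `email_worker`, and the event fields are hypothetical.

```python
import queue
import threading

events = queue.Queue()  # stands in for a message queue
sent_emails = []        # stands in for an email provider


def place_order(order_id: int) -> str:
    """Handle the user action and return immediately; the email is deferred."""
    events.put({"type": "order_placed", "order_id": order_id})
    return f"order {order_id} accepted"


def email_worker() -> None:
    """Consume events asynchronously, independent of the request path."""
    while True:
        event = events.get()
        if event["type"] == "order_placed":
            sent_emails.append(f"confirmation for order {event['order_id']}")
        events.task_done()


# A daemon thread plays the role of a separate consumer service.
threading.Thread(target=email_worker, daemon=True).start()

print(place_order(1))  # returns without waiting for the email
events.join()          # only for the demo: wait until the worker has caught up
print(sent_emails)
```

The user-facing function never waits for the email to be sent. During a traffic spike the queue simply grows and the worker drains it at its own pace.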

Distributed Systems as a Foundation

To handle large numbers of concurrent users, systems must distribute workload across multiple nodes.

This includes:

multiple application servers

distributed databases

caching layers

message queues

Caching layers, often implemented with Redis or a similar in-memory store, reduce database pressure and help keep response times stable under high concurrency.
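
The usual way to put a cache in front of a database is the cache-aside pattern: look in the cache first, fall back to the database on a miss, then store the result with an expiry time. The sketch below uses a plain in-memory dictionary instead of a real cache server and does not call any Redis client API. `TTLCache` and `load_user_from_db` are hypothetical names.

```python
import time
from typing import Callable, Dict, Tuple


class TTLCache:
    """In-memory stand-in for a cache server with per-key expiry."""

    def __init__(self, ttl_seconds: float) -> None:
        self._ttl = ttl_seconds
        self._entries: Dict[str, Tuple[float, str]] = {}  # key -> (expires_at, value)

    def get_or_load(self, key: str, load: Callable[[str], str]) -> str:
        now = time.monotonic()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: the database is not touched
        value = load(key)    # cache miss or expired entry: go to the database
        self._entries[key] = (now + self._ttl, value)
        return value


db_calls = []  # records every simulated database query


def load_user_from_db(user_id: str) -> str:
    db_calls.append(user_id)
    return f"user-{user_id}"


cache = TTLCache(ttl_seconds=60)
for _ in range(1000):
    cache.get_or_load("42", load_user_from_db)

print(f"requests: 1000, database queries: {len(db_calls)}")
```

The expiry time is the trade-off to tune: a longer TTL removes more database load but serves older data.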

Scaling a web application at this level is no longer about adding resources - it is about distributing responsibility.

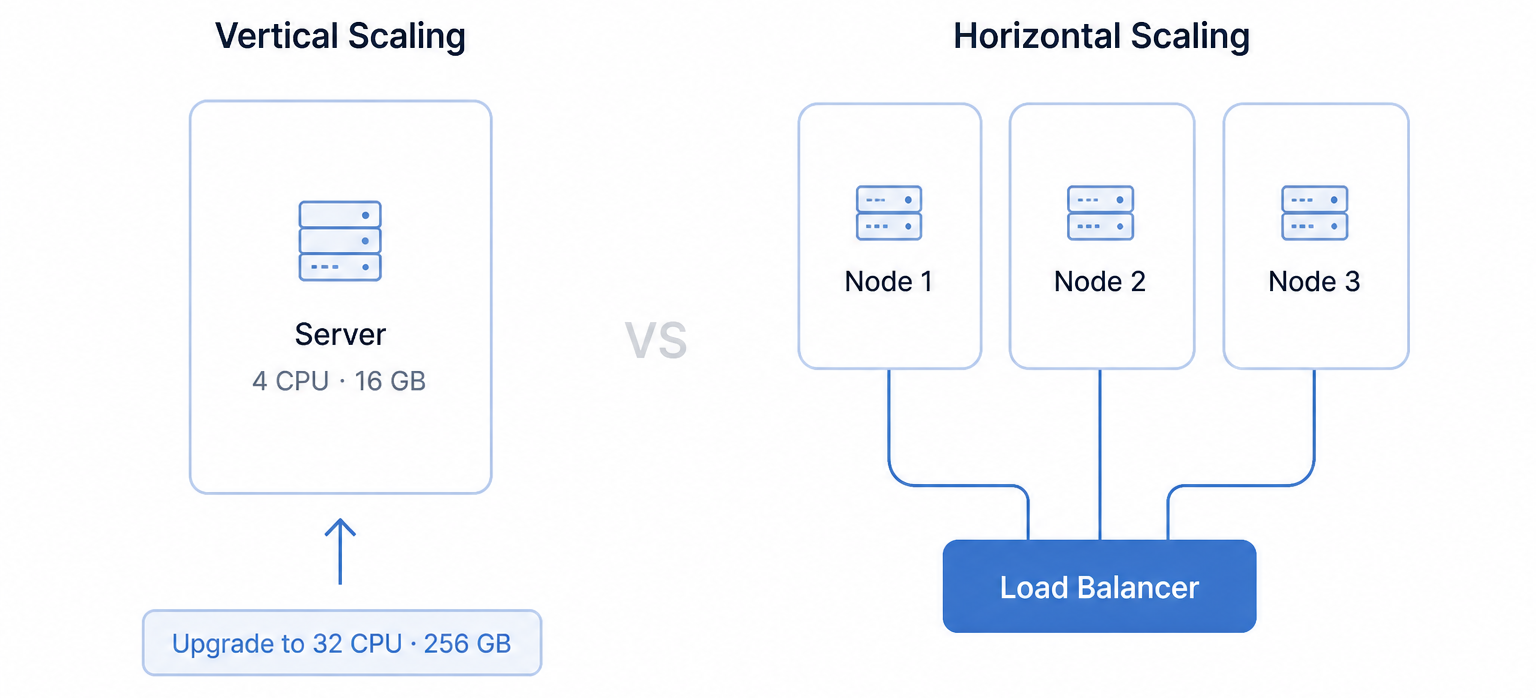

Horizontal vs Vertical Scaling: The Real Trade-Off

At some point, every growing system faces a fundamental choice.

Vertical Scaling (Scale Up)

increase server power

simple to implement

limited in the long term by the largest machine you can buy or rent

This works well in early stages.

Horizontal Scaling (Scale Out)

add more servers

distribute traffic

requires proper architecture

Horizontal scaling is the foundation of any high load system architecture.
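
To make "add more servers, distribute traffic" concrete, here is a minimal round-robin balancer sketch. Real load balancers also track server health and load. `RoundRobinBalancer` and the server names are hypothetical.

```python
from typing import Iterable, List


class RoundRobinBalancer:
    """Hands out servers in rotation so traffic is spread evenly."""

    def __init__(self, servers: Iterable[str]) -> None:
        self._servers: List[str] = list(servers)
        self._next = 0

    def add_server(self, name: str) -> None:
        """Scaling out: a new server simply joins the rotation."""
        self._servers.append(name)

    def route(self) -> str:
        server = self._servers[self._next % len(self._servers)]
        self._next += 1
        return server


balancer = RoundRobinBalancer(["app-1", "app-2"])
first_batch = [balancer.route() for _ in range(4)]

balancer.add_server("app-3")  # capacity grows without touching existing servers
second_batch = [balancer.route() for _ in range(3)]

print(first_batch)
print(second_batch)
```

This only works if any server can handle any request, which is why horizontal scaling depends on keeping the application tier stateless.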

For businesses, this is where system scalability becomes a strategic investment rather than a technical upgrade.

Vertical scaling vs Horizontal scaling

High Load Architecture Is About Trade-Offs, Not Perfection

There is no universal architecture that fits every product.

Every decision involves trade-offs between:

performance

cost

complexity

development speed

For businesses, high load means:

choosing what to optimize now

delaying what is not needed

preparing for growth without overpaying for it

This is why experienced teams treat high load system development as a strategic process, balancing performance, cost, and complexity instead of blindly following architectural trends.

Conclusion

High load architecture is not about building systems for millions of users from day one. It is about building systems that can become scalable when needed without breaking or requiring a complete rewrite.

The biggest mistake is not underengineering - it is premature overengineering. Many products fail not because they could not scale, but because they became too complex too early.

A scalable web application starts with a clear understanding of business goals, realistic traffic expectations, and a flexible architecture that evolves over time.

The most successful systems are not the most complex ones. They are the ones that scale at the right time, in the right way, with the right level of investment.
